GOOGLE SCHOLAR LABS – Last week Google launched a new artificial intelligence tool, Google Scholar Labs, designed to help researchers navigate complex academic questions.

Curated by Business Science Daily — peer-reviewed sources, human-verified.

The tool moves beyond simple keyword matching. It analyses a research question to identify its key topics and relationships, searches Google Scholar’s vast database, and evaluates the results to pinpoint the most relevant papers. For each selected paper, it provides a brief explanation of its relevance to the query.

The innovation, currently available to users, signals a significant step in applying generative AI to scholarly work. However, it immediately raises questions for scholars about how to integrate such powerful tools without undermining the integrity of scholarly research.

These are the questions addressed in a recent editorial from the Journal of Management Studies (JMS). In it, the journal’s editors introduce a comprehensive new policy to navigate this new landscape.

The Double-Edged Sword of AI in Research

The JMS editorial makes clear that AI’s impact is double-edged, offering both significant augmentation and serious potential for harm. On the positive side, AI acts as a super-powered literature review tool. It automates the arduous task of sifting through thousands of papers to find the most pertinent ones. Furthermore, it supports the researcher by providing summaries and context, much like a highly efficient research assistant. This allows scholars to focus their limited time on deep reading and critical synthesis.

However, the JMS policy also warns of risks that apply to tools like Scholar Labs:

- The Risk of Inaccuracy: AI is known for hallucinations, that is, generating persuasive but incorrect or nonsensical information. If a researcher were to treat the AI-generated summary of a paper as a substitute for reading it, they could base their work on an inaccurate understanding.

- The Loss of Human Judgment: Although the tool provides a list of papers, it is the scholar’s responsibility to decide which of them are key. AI cannot determine which papers are truly foundational, which methodologies are sound, or how the literature fits together into a novel theoretical framework.

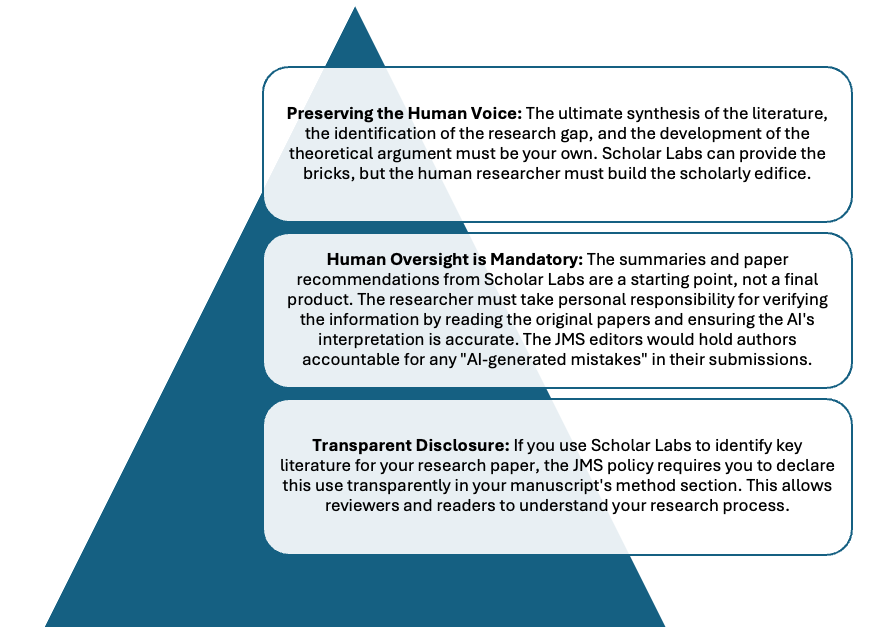

The JMS Framework for Responsible AI Use

In response to these challenges, the JMS policy establishes a clear framework built on Transparency, Oversight, and Human Responsibility.

A Powerful Tool, with Guardrails

The launch of Google Scholar Labs marks an exciting leap forward, bringing the power of generative AI directly into the researcher’s workflow. It exemplifies the trend of AI-assisted research that is transforming academia.

However, as the Journal of Management Studies so cogently argues, these new AI-fueled search engines must be used with care.

By following the principles of transparency, critical oversight, and ultimate human responsibility—as outlined in the JMS policy—researchers can leverage innovations like Google Scholar Labs to enhance their work’s efficiency and depth, while safeguarding the integrity and intellectual contribution that define true scholarly achievement.

Reference

Gatrell, C., Muzio, D., Post, C., & Wickert, C. (2024). Here, there and everywhere: On the responsible use of artificial intelligence (AI) in management research and the peer-review process. Journal of Management Studies, 61(3), 739–751.